Bob Neves and a team of editors discuss the disconnect he sees between the reliability testing users are doing and what they encounter in practice, and why most reliability testing today should be considered durability testing.

Nolan Johnson: What are the latest developments in assembly reliability?

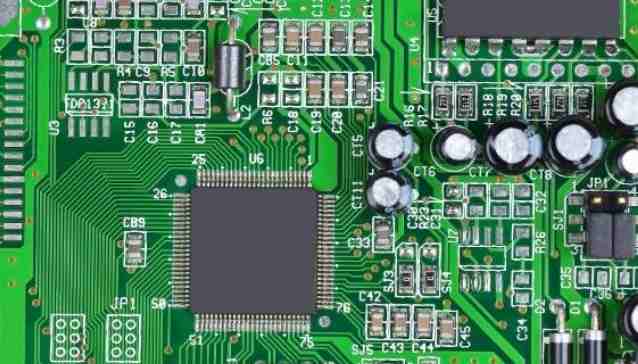

Bob Neves: My experience and focus have been largely around PCBs in the electronics industry. Most of my clients are in the microelectronics field and are mainly engaged in testing and evaluation. They are suppliers to PCB manufacturers, PCB manufacturers themselves, and PCB users. When we talk about testing, most people who buy PCBs see them the way they see resistors or capacitors: just another component in their build list. To be honest, you can't really think of a PCB as a component. You have to think of it as a very complex subsystem, just like a power supply or some other purchased unit that contains multiple components and can do more for you than a capacitor or resistor, which provides only a single function.

PCBs are used to carry signals between components and to isolate signals from one another. When there is a problem with an electronic assembly, these are the areas you tend to look at first. Is the signal getting to the right place, or is it missing where it should be present? Many of the signal problems I've seen trace back to the interconnection or isolation on the PCB. Engineers say to me, "If I hold this component down, it works," or, "If I heat it with a hot air gun, my system works. But if I take the hot air gun away, it doesn't work anymore." Many of the failures that occur in this situation end up being PCB related.

For electronic products, reliability problems begin to appear after the assembly process is completed. You put all the components on a bare circuit board, you rework the problem areas, you test the rework, and once that's done, you're ready to put the board into service, and that's when you start thinking about reliability. If you're doing reliability testing, whether it's on a bare PCB, on a component, or on the entire assembly, you need to run some kind of simulation of the assembly process first, so that the sample has been through what a finished product goes through before it leaves the factory. Not everybody does that. When a part comes in, a lot of people just pull one from the lot and test it as received, or they do an electrical test on the PCB before the components are attached and say, "Everything's fine." However, your goal is to see how long the product will last, and no matter what type of test you are doing, you need to do some type of soldering process simulation before the test. The simulation must also include the rework and repair steps of the assembly process.

Knowing your product's reliability is expensive because you must first understand where your product will be used, how it will be used, and in what environment. Once you have this information, you must find a way to do accelerated aging testing on the product in a reasonable amount of time. You can't take 10 years to see if your product has a 10-year lifespan. You have to find some way to accelerate the product's life, and what you do in accelerated life testing has to be consistent with what the customer is going to do in the real world. It takes a lot of research: looking individually at how each attribute will affect the product in use, and working out how to accelerate it in a controlled way, without adding or removing factors that could cause the product to fail. Whether it's accelerated environmental testing, accelerated mechanical testing, or any of the other tests that put unusual stresses on your product, accelerating these factors properly can give you a clear picture of how real-world use affects the life of your product.
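As a rough illustration of the arithmetic behind "you can't take 10 years," here is a minimal sketch of an acceleration-factor calculation for thermal cycling. It assumes a simplified Coffin-Manson power law, a common textbook model that is not named in the interview. The temperature swings, the exponent, and the one-cycle-per-day field profile are all hypothetical values chosen for the example, not data from the speaker.

```python
def coffin_manson_af(delta_t_test: float, delta_t_use: float, exponent: float) -> float:
    """Acceleration factor under a simplified Coffin-Manson power law.

    AF = (delta_t_test / delta_t_use) ** exponent
    The exponent is material- and failure-mode-dependent; it must come
    from research on the actual product, not from this sketch.
    """
    if delta_t_test <= 0 or delta_t_use <= 0:
        raise ValueError("temperature swings must be positive")
    return (delta_t_test / delta_t_use) ** exponent


def required_test_cycles(field_cycles: float, af: float) -> float:
    """Chamber cycles that represent `field_cycles` of real use."""
    return field_cycles / af


# Hypothetical inputs (assumptions for illustration only)
field_cycles = 10 * 365          # 10-year life at one thermal cycle per day
af = coffin_manson_af(
    delta_t_test=100.0,          # assumed chamber swing, in degrees C
    delta_t_use=50.0,            # assumed field swing, in degrees C
    exponent=2.0,                # assumed exponent
)
print(af, required_test_cycles(field_cycles, af))  # prints: 4.0 912.5
```

The resulting cycle count is only as meaningful as the assumed field swing and exponent. If those do not reflect how the customer actually uses the product, the number says nothing about real-world life.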

Most people don't do that. Most people tend to do more of a durability type of testing. They do things that are harsher than anything the customer intends. They don't really know what conditions the product sees in the actual application, so they add more heating, cooling, cycles, or vibration during testing to try to shorten the life of the product and put strict requirements on it. They do some type of accelerated testing that may or may not be relevant to the actual application. I get this all the time. Some people say, "I'm going to do this 500 to 1,000 times." It reminds me of the American Tourister and Samsonite commercials from the 1970s and 1980s in which they had a gorilla smash a suitcase against its cage to prove that it was sturdy. The gorilla kept pounding it around the cage. If the case didn't crack or break, it was proven to be reliable: a true test of durability.

Unfortunately, a lot of the testing that's being done is this gorilla durability test. They say, "If the product can withstand the gorilla test, it will meet the customer's requirements." This attitude has prevailed at various times, and the approach is generally cheaper and easier. People have some confidence in that. This disconnect between what you do in testing and what happens in the user's real-world application is why most "reliability testing" today is really durability testing. Durability testing is simple and inexpensive to do, and people often just copy what competitors do or use industry standards. However, they do not understand the relationship between the tests they run and how their products behave in real-world applications.